sleepAffirmation_1.Sleep fluids (lofi, sleep, study, chill)

How have you been sleeping recently? sleepAffirmation_1.Sleep fluids (lofi, sleep, study, chill) is a multimedia, audio-visual installation which both critiques and emulates mindfulness and wellness products.

Produced by Katie Tindle

Introduction

At the beginning of the year, as lockdown came into force in the UK because of the coronavirus pandemic, I struggled to get to sleep. Around the same time I began receiving more advertisements for products and services which claim to help you achieve sustained sleep. There is an abundance of these products, and they seemed to proliferate during the early stages of lockdown. To name a few: apps like Headspace and Calm offer guided meditations; Amazon's audiobook subscription service Audible gives access to bedtime stories aimed at adults and read by celebrities; and Spotify gives listeners access to a plethora of audiobooks, music playlists and meditations, both commercial and user-generated. These services promise deep, restorative relaxation and rest, but they also require the user to remain plugged in and commercially engaged throughout the night. Users either absorb restful imperatives unconsciously (if the products do indeed send them to sleep) or else use a light-emitting device before or in bed, something the NHS advises against if you are struggling to sleep. This piece is in some ways a direct response to my experience of this period and to the claims made by these products. I wanted them to work for me personally, but I wrestled with a question: regardless of whether they are effective, is it worth letting your exchange with Amazon bleed into your unconscious?

My work here takes these questions to an extreme. If these products really do work, can they work too well? Is it possible to become sodden and over-saturated with sleep? In a society where wellness is both a consumable and a moral imperative (Sontag, 2002), can one be a relaxation glutton, and what happens when relaxation is consumed in excess?

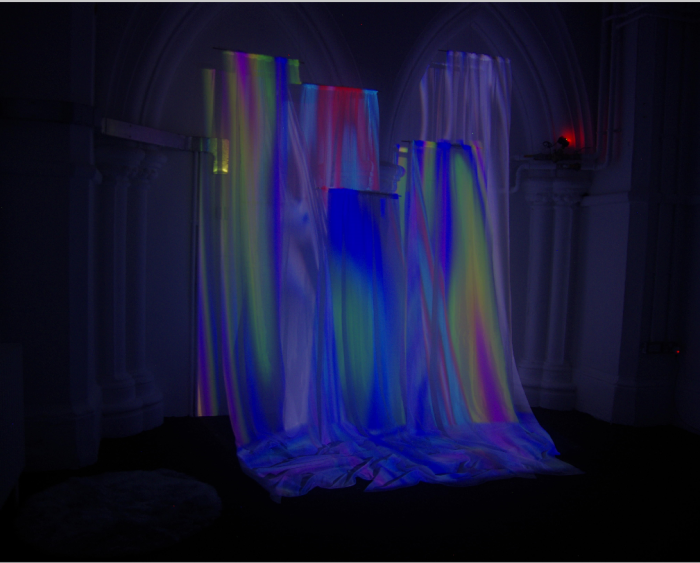

The installation combines projected, garish, generative fluids written in GLSL fragment shaders; sickly lavender scents; and a spoken word sound piece intoning ‘calming’ poetry co-written with the machine learning language model GPT-2. Together they create a relaxation delivery device which distills calming ambience to a point that verges on sickening.

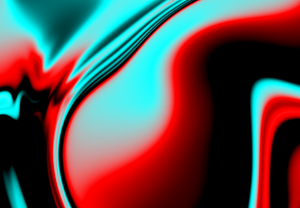

Example of a garish, fluid-like shader written in GLSL

Constrained writing and machine learning

Creative writing and poetics have been part of my artistic practice for a number of years. Increasingly, I’ve become aware of how integral writing is to my practice, and I have used explorative writing methodologies, along with sketching, when developing projects. I developed these methodologies significantly during a summer residency organised by Goldsmiths and Arebyte Gallery. They involve setting arbitrary rules (for example, not using the letter ‘O’) and time constraints that mimic an algorithmic process. This forces me to be inventive, but also removes some of the pressure of a blank page. The practice is reminiscent of the avant-garde constrained writing systems used by groups like Oulipo in the 1960s. I used these techniques iteratively while making this work to explore and identify my key concerns, the practice compelling me to distill my thoughts despite the constraints. I also used them to co-write, with the machine learning language model GPT-2, the text that formed the basis of the installation's spoken word piece.
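
A rule like "no letter ‘O’" is a lipogram, and it is simple enough to state as a tiny algorithm. The sketch below is a hypothetical helper, not part of my own process (which was done by hand). It flags any word in a draft that breaks the rule. The function name `lipogram_violations` and its `banned` parameter are illustrative assumptions.

```python
import re


def lipogram_violations(text: str, banned: str = "o") -> list[str]:
    """Return the words in `text` that break a lipogram rule.

    A lipogram bans one letter outright. The check is case-insensitive,
    so both 'O' and 'o' count as violations.
    """
    banned = banned.lower()
    # Treat runs of letters and apostrophes as words.
    words = re.findall(r"[A-Za-z']+", text)
    return [w for w in words if banned in w.lower()]


draft = "Sleep, drift, let the tide carry you under"
print(lipogram_violations(draft))  # → ['you']
```

The helper reports violations rather than rewriting them. Finding a replacement that keeps the rhythm of the line is the inventive part the constraint is meant to force.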

GPT-2 is a text generation model released by OpenAI in 2019. It comes in four sizes (small, medium, large and extra-large), and all of them are unwieldy: even the smallest uses too much memory to fine-tune comfortably on a consumer GPU. However, it can still be used thanks to intrepid data scientist Max Woolf, who created a Google Colaboratory (Colab) notebook that runs on Google's cloud GPUs and lets you fine-tune GPT-2 on a custom data set.

Examples of short generated texts

Text generated using GPT-2

To create my data set, I used Python and Beautiful Soup to scrape sleep affirmations and stories from the internet, and the Spotipy API library to pull the contents and lyrics of sleep playlists. The first stage was to fine-tune the model on this text and then generate sections of prose. The model works by repeatedly predicting a likely next token (a word or word fragment) given the text so far. As a result, particularly common phrases and words from the data set are drawn out. In this case, imagery relating to water (rivers, oceans, rain) was particularly common.
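
Why do common images get "drawn out"? A toy model makes the mechanism visible. The sketch below is a word-level bigram model that greedily picks the most frequent next word. This is a deliberate simplification and not how GPT-2 works internally. GPT-2 is a transformer that predicts sub-word tokens using the whole preceding context, and its output is usually sampled rather than chosen greedily. The corpus lines and the function names `train_bigrams` and `generate` are hypothetical; they are not taken from my data set or code.

```python
from collections import Counter, defaultdict


def train_bigrams(lines: list[str]) -> dict[str, Counter]:
    """Count, for every word, which words follow it in the corpus."""
    following: dict[str, Counter] = defaultdict(Counter)
    for line in lines:
        words = line.lower().split()
        for current, nxt in zip(words, words[1:]):
            following[current][nxt] += 1
    return following


def generate(model: dict[str, Counter], start: str, length: int = 6) -> str:
    """Greedily append the most frequent next word, up to `length` times."""
    words = [start]
    for _ in range(length):
        options = model.get(words[-1])
        if not options:
            break  # this word was never followed by anything in the corpus
        words.append(options.most_common(1)[0][0])
    return " ".join(words)


# Invented example lines standing in for scraped sleep affirmations.
corpus = [
    "let the river carry you to sleep",
    "let the rain wash over you",
    "the river carry you to sleep",
    "feel the river flowing slowly",
]
model = train_bigrams(corpus)
print(generate(model, "let"))  # → let the river carry you to sleep
```

"River" follows "the" three times in this corpus, against once for "rain", so it wins every time. Over a much larger data set the same pressure pulls recurring water imagery to the surface.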

Fluid-like shader written in GLSL

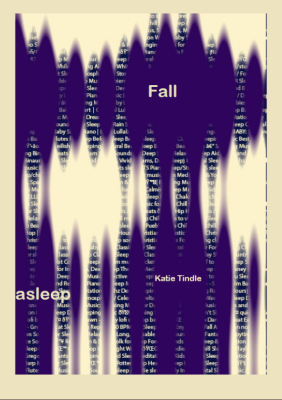

I then generated short phrases to use as creative writing prompts and to weave into the text itself. In this way I shadowed rhetorical devices that emerged from the texts used in the apps I was critiquing. Rather than being didactic and reaching a conclusion about whether these products were good for you, I wanted to complicate my relationship with these technologies and remain entangled with them. I also tried to acknowledge the collaboration with GPT-2 through nods to its artificiality, using glitches and repeats in the prose. The full text as recorded for the installation is available to read here and was accessible via QR code during the exhibition. The delivery of the text, as heard in the video above, is based on the tone of the guided meditations used to train the machine learning model. In the early stages of developing the work, I created an artist book as a record of the process. It contains some early writing experiments and imagery made with fragment shaders.

View the book here.

Shaders

When designing the installation, I wanted to mimic some of the fluid imagery that emerged in the text.

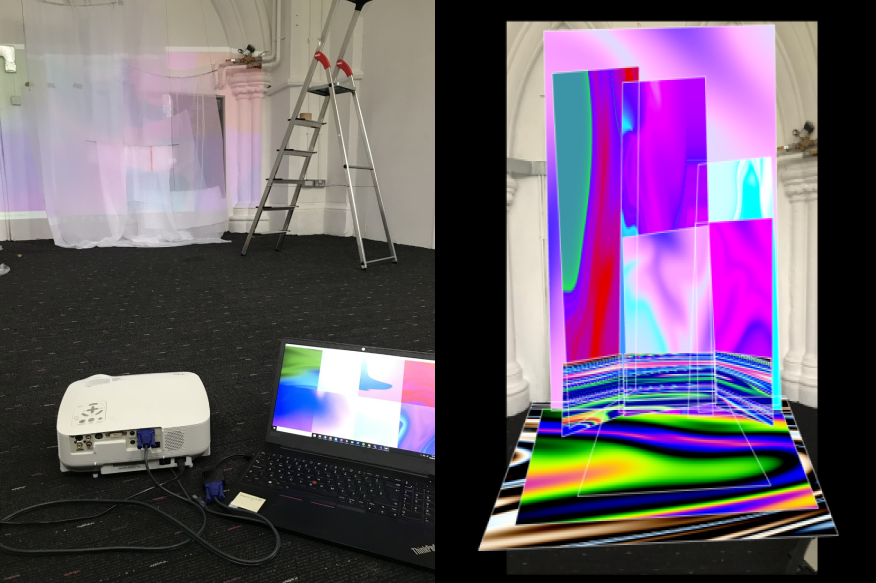

To achieve this, I used GLSL fragment shaders. Because a fragment shader runs in parallel on the GPU, computing every pixel's colour at the same time, I was able to generate very smooth and complex imagery with a relatively lightweight program. The images are designed to skirt the line between the natural (their movement resembles water or oil) and the artificial (their garish colour palette). They were projection mapped onto the screens using ofxPiMapper, an openFrameworks add-on.
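
A fragment shader is essentially a function from a pixel's coordinates (and time) to a colour. The GPU calls this function for every pixel at once. The sketch below mirrors that structure on the CPU in Python so the idea can be read without a GPU. It is a hypothetical illustration and not my actual shader. It assumes a simple sine-based domain warp for the liquid movement and a cosine colour palette for the artificial colours. The names `fragment` and `render` are my own; in real GLSL this logic would live in the shader's `main()`.

```python
import math


def fragment(u: float, v: float, time: float) -> tuple[float, float, float]:
    """Colour for one pixel, in the spirit of a GLSL fragment shader.

    u and v are normalised pixel coordinates in [0, 1]. The return value is
    an RGB triple in [0, 1], like the colour a shader writes out (minus alpha).
    """
    # Domain warp: feed sine waves back into the coordinates so the
    # pattern ripples and folds like a liquid surface over time.
    x, y = u * 3.0, v * 3.0
    for i in range(1, 4):
        x += 0.6 / i * math.sin(i * y + time)
        y += 0.6 / i * math.cos(i * x + time)
    t = 0.5 + 0.5 * math.sin(x + y)  # scalar "flow" value in [0, 1]
    # Cosine palette: each channel gets a phase offset, giving saturated,
    # deliberately artificial colours.
    r, g, b = (0.5 + 0.5 * math.cos(2.0 * math.pi * (t + offset))
               for offset in (0.0, 0.33, 0.67))
    return (r, g, b)


def render(width: int, height: int,
           time: float) -> list[list[tuple[float, float, float]]]:
    """Call fragment() once per pixel.

    A GPU runs these calls in parallel; here they simply run in a loop.
    """
    return [
        [fragment(px / max(width - 1, 1), py / max(height - 1, 1), time)
         for px in range(width)]
        for py in range(height)
    ]


frame = render(8, 4, time=1.5)
print(len(frame), len(frame[0]))  # → 4 8
```

Each pixel depends only on its own coordinates and the shared `time` value, never on its neighbours. That independence is what lets the GPU evaluate all pixels simultaneously and keeps the real shader light enough to animate smoothly.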

An early prototype of the installation.

My intention was to envelop the viewer in these rich, smooth, churning images. To achieve this, I designed a series of hanging screens, or curtains, using voile, dowel and thin gold chain. I arranged them to create depth and texture and to echo the ripple effects in the shaders.

These curtains were also heavily scented with dried lavender and lavender oil, overpowering the room with natural sleep aids (although the scent wasn’t as strong as I’d hoped when experienced through a face mask).

Detail of the installation. Lavender and glass pebbles are just visible in the image.

When designing this installation, I was influenced by installation artists like Pipilotti Rist and Heather Phillipson, who create immersive, at times overwhelming, set pieces, as well as by the vivid corporeal imagery of the surrealist feminist author Angela Carter.

Reflections

This work was made during the Covid-19 pandemic, which meant we did not have the technical support we would normally have had access to. As a result, I was not able to install my curtains into the (very high) ceiling with the help of a technician, so I compromised by hanging the work from the wall myself. I was able to create some of the depth I intended, but had conditions been ideal, I would have installed the work so that the viewer could step into a curtained area and sit, fully enclosed in the projections.

That being said, I am proud of what I was able to achieve with this work. I had never written shaders before, and GLSL, although a C-like language, was not something I was immediately comfortable with. Nor had I used GPT-2 before, but I was able to use it effectively enough to experiment productively with training sets. Each artistic choice was made carefully and deliberately, and I think this gives the work a solid contextual underpinning. The atmosphere in the space was calm and quiet but, as I intended, it belied a critical message.

Resources

Lewis Lepton shader tutorial series

The Book of Shaders by Patricio Gonzalez Vivo and Jen Lowe

Beautiful Soup Python library for pulling data out of HTML and XML files

Spotipy Python library for the Spotify Web API

ofxPiMapper, projection mapping software and an add-on for openFrameworks

OpenAI GPT-2 text generation model

Max Woolf's Google Colaboratory notebook for fine-tuning GPT-2