Genetic Drum-Machine

The Genetic Drum-Machine combines computational genetics with a sample sequencer to create a generative tool for music. The idea came to me whilst listening to the drum beats in songs and realizing that the most exciting parts of their loops were often the random, slightly awkward yet perfect beats placed around a solid groove. This is where the generative element comes into its own: it doesn’t just let you merge and combine beats, it also adds that element of randomness. It lets you push your beat that one bit further, exploring the genetically generated rhythms quickly and with reasonably good control.

produced by: George Simms

Interface

The TouchOSC interface and a computer screen work together to present four separate menu screens, ‘Recording’, ‘Breeding’, ‘Settings’ and ‘Help’, each with its own on-screen visualization and TouchOSC layout. Together they create a smooth, easy-to-navigate UI for the Genetic Drum-Machine, with simple instructions throughout.

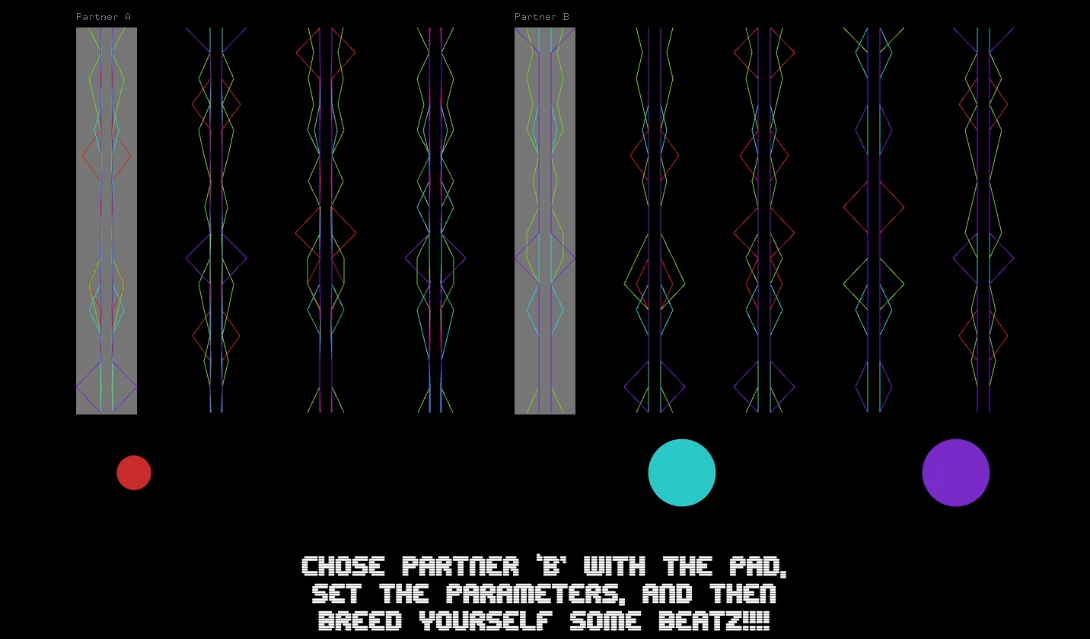

Genetic Visualization

For the visualization of the beats/DNA I wanted to create something that represented this relationship but also created a new language, or way of understanding the codes. I wanted it to be easy to comprehend at first glance, so that anyone could spot similarities between beats/DNA and choose their selection on that basis. The structure uses the very nature of the DNA (a vector of floats): each value sets the x position of a polyline vertex, while its index in the vector sets the position along the y axis. The different colours represent the different layers of instruments/DNA, and together these elements produce a bespoke visualization of the beat. This means of representation also allows for a more detailed reading, with even the subtlest differences being easy to spot.
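A minimal sketch of this mapping, assuming the vertices are spaced evenly along the y axis and each value is scaled by the drawing width. The `Point2D` type and the `dnaToPolyline` name are hypothetical and used only for illustration; the project's actual drawing code is not shown in this write-up.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical 2D point type, used here to avoid any framework dependency.
struct Point2D {
    float x;
    float y;
};

// Map one set of DNA (floats in [0, 1]) to polyline vertices.
// Each gene's index sets the y position and its value sets the x position.
// Assumption: vertices are evenly spaced from y = 0 to y = height.
std::vector<Point2D> dnaToPolyline(const std::vector<float>& dna,
                                   float width, float height) {
    assert(dna.size() >= 2);  // at least two genes are needed to draw a line
    std::vector<Point2D> points;
    points.reserve(dna.size());
    const float stepY = height / static_cast<float>(dna.size() - 1);
    for (std::size_t i = 0; i < dna.size(); ++i) {
        points.push_back({dna[i] * width, static_cast<float>(i) * stepY});
    }
    return points;
}
```

Drawing one such polyline per instrument layer, each in its own colour, would give the layered picture described above.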

Sample Sequencer

I wrote the sequencer from scratch, with little reference to other models, but it seems to work fine. It uses the DNA structure as its encoding: a vector of floats between 0 and 1. I chose a vector size of 16 to represent 16 beats. The sequencer runs over the DNA, taking each value to select one of four sounds or silence, and uses the value’s place in the vector as its position in the loop. It combines four of these sets of DNA, one per instrument layer. I did this because limiting the number of samples on each DNA and increasing the number of DNA creates smoother transitions. Each set of DNA is also assigned a vector of sounds/samples, and the value of each beat (a float from 0 to 1) dictates which sample is triggered, if any.
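The decoding step could look roughly like the sketch below. The write-up does not say how the 0 to 1 range is divided, so this assumes five equal-width bands, with the lowest band meaning silence and the other four selecting a sample. The names `decodeGene`, `triggersAtStep` and `kSilence` are hypothetical.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>

constexpr int kSilence = -1;         // sentinel: no sample on this step
constexpr int kSamplesPerLayer = 4;  // four sounds per set of DNA, per the text
constexpr std::size_t kSteps = 16;   // 16 beats per loop, per the text

// Decode one gene (float in [0, 1]) to a sample index 0..3, or kSilence.
// Assumption: five equal-width bands, the lowest of which is silence.
int decodeGene(float gene) {
    assert(gene >= 0.0f && gene <= 1.0f);
    const int bands = kSamplesPerLayer + 1;
    // Clamp so that a gene of exactly 1.0 falls in the top band.
    const int band = std::min(static_cast<int>(gene * bands), bands - 1);
    return band == 0 ? kSilence : band - 1;
}

// For one step of the loop, collect the sample index each layer triggers.
std::vector<int> triggersAtStep(
        const std::vector<std::array<float, kSteps>>& layers,
        std::size_t step) {
    assert(step < kSteps);
    std::vector<int> out;
    out.reserve(layers.size());
    for (const auto& dna : layers) {
        out.push_back(decodeGene(dna[step]));
    }
    return out;
}
```

A playback loop would call `triggersAtStep` once per beat and play, for each layer, the sample at the returned index in that layer's sample vector, skipping any layer that returns `kSilence`.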

Computational Genetics

This element is one of the most exciting bits, as its emergent behavior always brings about some great and unexpected results. I started from a basic genetics model we received in class and reworked it to make it more subtle and better suited to beat making. One of the major changes was using a deque to hold the sets of DNA, so that they are stored linearly and in time order; this keeps the participant always exploring and gives a smooth way to store the DNA. I made the merging step more sophisticated by deciding beat by beat which parent each beat is taken from, instead of taking chunks. Its interactive controls let you set the bias towards either parent (which one it takes more attributes from) and choose which beat to combine with the one currently playing. I also modified the mutation to give more control: as well as the primary parameter for how much it mutates, there is now a second parameter that dictates how big a step each mutation can take. I found this enabled a more organic, additive approach instead of a totally random mutation; basically, you can have lots of small mutations, a few large ones, and so on.
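The three ideas above (per-beat merging with a parent bias, two-parameter mutation, and a deque of past DNA) can be sketched as follows. This is an illustration under stated assumptions, not the project's code. It assumes the bias is the probability that a beat comes from the first parent, that a mutation adds a uniform random offset within the step size and clamps the result to 0 to 1, and that the deque drops its oldest entry once a size limit is reached. All function and parameter names are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <deque>
#include <random>
#include <utility>
#include <vector>

using DNA = std::vector<float>;

// Per-beat crossover: each gene is taken from parent a with probability
// `bias`, otherwise from parent b (rather than copying whole chunks).
DNA crossover(const DNA& a, const DNA& b, float bias, std::mt19937& rng) {
    assert(a.size() == b.size());
    std::bernoulli_distribution fromA(bias);
    DNA child(a.size());
    for (std::size_t i = 0; i < a.size(); ++i) {
        child[i] = fromA(rng) ? a[i] : b[i];
    }
    return child;
}

// Two-parameter mutation: `rate` is the chance that a gene mutates and
// `maxStep` bounds how far it can move when it does.
void mutate(DNA& dna, float rate, float maxStep, std::mt19937& rng) {
    std::bernoulli_distribution hit(rate);
    std::uniform_real_distribution<float> step(-maxStep, maxStep);
    for (float& gene : dna) {
        if (hit(rng)) {
            // Nudge the gene and keep it inside the valid [0, 1] range.
            gene = std::min(1.0f, std::max(0.0f, gene + step(rng)));
        }
    }
}

// Time-ordered history: newest DNA at the back, oldest dropped from the
// front once the (assumed) size limit is exceeded.
void remember(std::deque<DNA>& history, DNA dna, std::size_t maxSize) {
    history.push_back(std::move(dna));
    while (history.size() > maxSize) {
        history.pop_front();
    }
}
```

With this shape, a high `rate` and a small `maxStep` gives many small mutations, while a low `rate` and a large `maxStep` gives a few big jumps.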

Future Improvements

I would like to make this project slightly less self-contained, so that it can link to other programs via MIDI, ofxAbletonLink or something similar. Making it a seamlessly linked tool for playing live would open up a greater range of possibilities: you could use a music interface for most of the sound generation and use this primarily for beat generation and maybe effects control. Its generative capability means it can handle complex rhythms fluidly, especially when you increase it to 16ths, so I would find it rewarding to see how far people can push it and what they can make.

References

http://www.yeeking.net/evosynth/ - genetic synth by Matthew Yee-King

https://experiments.withgoogle.com/drum-machine - The Infinite Drum Machine by Manny Tan & Kyle McDonald